Email marketing A/B testing is the systematic process of sending two or more variations of your email campaigns to different segments of your audience to determine which version delivers better results. With email marketing generating an average ROI of $42 for every $1 spent, optimizing your campaigns through strategic testing can significantly impact your bottom line.

Split testing eliminates guesswork from your email strategy by providing data-driven insights into what resonates with your subscribers. Whether you’re testing subject lines, call-to-action buttons, send times, or email design, A/B testing helps you make informed decisions that boost open rates, click-through rates, and conversions.

In this comprehensive guide, you’ll discover:

- What email marketing A/B testing is and why it’s essential for campaign success

- 15 critical elements you should test in your email campaigns

- How to avoid the biggest A/B testing mistakes that waste time and budget

- Proven best practices from successful email marketers

- A step-by-step process to set up and analyze your email A/B tests

- Advanced strategies to maximize your email marketing ROI

Why email A/B testing is essential for modern marketers

Email A/B testing isn’t just a nice-to-have feature but a competitive necessity. Here’s why savvy marketers prioritize split testing:

- Data-driven decision making: Instead of relying on assumptions about what your audience wants, A/B testing provides concrete evidence about which email elements drive engagement and conversions.

- Improved campaign performance: Regular testing typically leads to 10-25% improvements in key metrics like open rates and click-through rates, translating directly to increased revenue.

- Better understanding of your audience: Each test reveals valuable insights about subscriber preferences, helping you create more targeted and effective future campaigns.

- Competitive advantage: While many businesses send generic emails, those who consistently test and optimize their campaigns achieve superior results and stronger customer relationships.

Personal Note: “I hope this email finds you well.” How many times per week do you get an email starting with this sentence? I receive that greeting frequently, and I know it doesn’t work for me – which is exactly why I’d never use such a generic opening in my email campaigns.

This gut instinct about obvious email mistakes is valuable, but what about subtler elements like subject line length or call-to-action placement? That’s where systematic A/B testing becomes invaluable.

What’s your excuse?

There are several misconceptions and myths about email A/B testing, and it’s time to get rid of them once and for all.

Oftentimes, solopreneurs, small business owners, and even marketing managers in rising startups tend to overlook split testing because of:

- Limited resources – people believe email testing is only possible with a solid budget and a dozen folks in their marketing department. In reality, it won’t cost you an arm and a leg, and you can easily do it, even if you’re a team of one. Later on, we’ll explain how.

- No time – with so many tasks and projects on their plate, people assume A/B testing will be a time-suck. False! It’s way easier than you think, and automation can help you streamline the process.

- Lack of know-how – the idea of running tests may seem too challenging to execute. The truth is, it doesn’t require an engineering skill set and experience. And even if you previously conducted some tests that led to nothing, there’s always room for improvement.

Once you know it’s not rocket science, it’s time to learn which variables of your emails and newsletters you should test.

What should you test? 15 elements to put on your list

Whether you plan to send out your newsletter, an onboarding email, or a Black Friday promo, each message consists of multiple elements. And each of those can positively or negatively impact your campaign numbers.

Let’s break down the email structure.

1. From name

Take a look at the new messages in your inbox. One of the first – if not the first – things you pay attention to is the name of the person or a brand who sent you an email.

This is a screenshot from my Gmail. As you can see, I’m subscribed to many websites, which means I receive tons of newsletters and offers.

Have a look at three different approaches:

- Using the name of a real person and the company they work in – “Ognjen from CXL.” They practice that because it can add some personal touch, which may increase the open rates because the recipients might feel more appreciated. It works perfectly if you have an established personal brand, but if not, it’s a good idea to test this approach.

- Using a company name but adding the word “team” – “The Miro Team.” They could have used plain “Miro” because the brand is recognizable, but highlighting the group of people behind the brand, rather than the brand name alone, might have worked out better for them.

- Using a company name but with different second words – “Grammarly Premium,” “Grammarly Insights.” The reason for that is to segment their types of messages. Whereas “Grammarly Insights” wants to give me a pat on the back for using their tool and sharing my achievements, “Grammarly Premium” wants to upsell me with a 40% discount.

2. Subject line

This element is even more crucial than the name of the sender. Your email content can be stunning and persuasive, but if you don’t manage to entice people with a catchy subject line, it’s all gone.

Let’s take another look at my inbox, but this time, let’s see the subject lines:

👉 This guide explains how to write catchy email subject lines.

Here are some of the variables that you can experiment with:

- Length. A long or short subject line? As you can see above, some are three to five words, and some are more extensive. The rule of thumb is not to exceed 50 characters. Do you know Brian Dean, the founder of Backlinko and Exploding Topics? A whopping 173,674 people subscribe to his SEO newsletter. Brian says that very concise subject lines delivered him the highest open rates. You can see what they look like below.

Please note, however, that Brian’s personal brand is massive, so what has worked for him doesn’t have to work for everyone. That’s why the bottom line is – test, test, test.

- Numbers. There are at least two types of emails where you can play with numbers in the subject line and see if they move the needle. Number one: promo emails – for Black Friday, Back to School, or Christmas, figures like 30%, 40%, or 50% work their magic. Number two: case studies – “10K creator podcast origin lessons.” They have the potential to balloon your open rates, but it will depend on the message and industry.

- Capital letters. There are different methods here. Some email marketers avoid capitalization, and others capitalize the beginning of every word or ONLY the word they want to emphasize the most:

3. Preheader

The preheader is the third element that people see in their inboxes without opening an email. So, it’s another factor that influences the recipient’s final call: “to open or not to open?”

While subject lines force you to be laconic regarding wording, preheaders give you more freedom to share the context of your message. Think of them as the subtitle of your blog article, telling something more about the content than 3-4 words in the subject line.

Here’s a solid preheader from Uncommon Goods newsletter:

4. Email design

OK, so we’re inside the email now. Think about your inbox again – what kind of emails do you receive? Visually rich or text-focused? Which format resonates with you most?

There are no right or wrong answers here. The design of your email will depend on several factors, such as:

- Whether you’re operating in the B2B or B2C reality

- What type of email is it: newsletter, onboarding, webinar invitation, special offer, etc.?

- What’s the business goal?

Typically, B2C emails are image-heavy, with limited copywriting. B2B emails, on the other hand, put more emphasis on text content – they are sometimes only limited to text and sometimes use a combination of imagery and words.

Plus, the essential element of the email structure is the call-to-action button.

Taking it all into account, there are five elements of the email you need to test:

- Amount of text

- Number of images

- Placement of images

- Number of CTAs

- Placement of CTAs

5. Copywriting

The visual part is vital, but when the messaging doesn’t resonate with your target audience, you can forget about high click-through rates. People will bounce back to their inbox and start checking other emails.

The way you write your emails must align with your:

- Brand messaging

- Tone of voice

- Your customers’ or subscribers’ pains and gains

What you can test regarding your email copywriting is the length of your emails, the angle, and the word choice.

6. Call-to-action

This is the bottom line of every email. It comes in the form of either hyperlinked text or a good-looking CTA button. Whether your goal is to direct your subscribers to your blog content or to increase sales, you need to nail it with just a few characters.

Which CTA drives more results? A solid A/B test will tell you that. And here are the five elements of a call-to-action that you can test:

- Copy

- Color

- Placement

- Design

- Text vs. image button

When selecting the most impactful words for the CTA, you can check out what other brands have used. You will come across action-oriented copy, such as: Buy Now, Start Your Trial Today, Save Your Seat, Download, or something more generic: Read More, Learn More, etc.

As for the design part, our tip is to place a CTA on a white background or surrounded by contrasting colors.

Here are some real-life examples of compelling CTAs:

7. Time of day and the day of the week

The best timing for sending emails has been puzzling marketers and small business owners for at least a decade. How do you strike that ideal moment when your subscribers will say, “OK, now I have a few spare minutes to check what they sent me”?

According to GetResponse’s 2022 Email Marketing Benchmarks report, the answer is not that simple. After analyzing key regions – US & Canada, LATAM, DACH, CEE, and Asia-Pacific, it turned out that:

- There are two significant peaks of open rates: one very early in the morning, at 4 AM, and the second around 6 PM. However, between those two reference points, there is a stable, high level during business hours.

- Click-to-open rates correspond with the above, with some other hikes at 6 AM and 9 AM.

- Click-through rates slightly increase at 4 AM, 6 AM, and 6 PM.

Considering all that, we hypothesize that sending your emails at 4 AM, 6 AM, 9 AM, and 6 PM would potentially bring you the best results. However, we highly encourage you to validate that. And the best option to do that is to carry out tests.

OK, so we know the hours, but what about the days of the week? Here, it gets trickier:

- Open rates plateau during the entire business week

- Click-to-open rates tend to be a bit more successful on Mondays, Wednesdays, and Fridays

- CTRs stay at the very same level from Monday to Friday

Pro tip: Try out GetResponse’s Perfect Timing and Time Travel delivery tools, and find a sweet spot for your next email marketing campaign.

8. Frequency

After figuring out the “when,” it’s time to think about “how often.” The challenge here is to strike a balance between achieving your marketing and business goals and flooding your subscribers with too many emails.

Remember that even famous brands struggle with subscribers opting out because their inboxes get hijacked repeatedly. So, you must be cautious when planning your campaigns and leave breathing space between the send-outs.

The good news is you can recognize when you overdo the email channel – tracking the unsubscribe and complaint rates can help.

9. Opt down

Some of your subscribers may decide to abandon you, even if you’re not very aggressive with the email frequency.

As much as having an unsubscribe link in your emails’ footer is required by law, it’s even better to also provide your recipients with an opt-down option. This way, you will give them control over what kind of messages they still want to receive from you and which ones are out.

OK, so they will say “no” to some of your email blasts, but hey – you will prevent them from unsubscribing.

10. Offer

Now, let’s talk about the essence of your email – your content. It’s all going to depend on the email type. A newsletter will have a different structure, more links, and a different goal than a product launch email or a promo code sales push.

Depending on the type, you will be building the content architecture differently, with unique hypotheses to test in each of them. For instance:

- Your Black Friday hypothesis will be – if we offer a 40% discount on our products, we will sell X number of (whatever you sell).

- Your “what’s new” product email hypothesis will be – if we embed a video at the top, it will increase the CTR by X%.

11. Hero shots and images

Humans love visuals. Period. Images help to catch people’s attention and absorb more from the content. It applies to email marketing as well.

Although B2B newsletters are often based entirely on text (and it works perfectly fine for them), and imagery is associated mainly with B2C and ecommerce, we highly encourage you to test visuals’ impact, regardless of the business type or industry.

Experiment with various types of images: photos, unique illustrations, testimonial quotes graphics, or stock vector art, and see how each of them influences engagement.

12. Mobile design

Another aspect of testing your emails boils down to checking if the mobile version works well. If it loads too slowly, or there are issues with the responsiveness of the design or the ability to click the CTAs, fixing that should be one of your priorities.

Sending newsletters with a flawed mobile version will hurt your campaigns’ bottom line. With today’s users’ attention span and how smothered with marketing communication they are, everything in your email needs to work perfectly – especially on their smartphones.

You have just seconds to win them over!

More on this in our Email Design Guide.

13. Landing pages

This is not a part of testing emails per se, but making sure that the whole architecture won’t fail you. You’ve tested your subject line, content, and mobile design? Great! But it will all fall apart if the landing page is not optimized.

There are several aspects of landing pages you need to pay attention to. Make sure your landing page is:

- Not cluttered with too many elements

- Simple and clear in messaging

- Clear about the action you want your visitors to take

- Optimized for mobile users

14. Social media links

Your subscribers are probably also your followers on social media. But they don’t have to be. So, growing your email list can sync with building up your organic social audience.

Find a way to entice them to follow you on social media with a tangible value that you’re going to share on Twitter, LinkedIn, or Facebook that won’t be a repetition of what you’ve already shared via newsletters.

What you can test here is the placement and the design of social media buttons in your emails.

Here are a couple of examples:

15. Footer

The social media buttons we touched upon above are the most common elements of an email footer.

What else can you inject in the bottom section of your emails?

- Links to previous newsletters and other content

- Opt-down and unsubscribe links

- Terms of service

- Logo linking to your homepage

While the footer is not a critical factor that can change any numbers drastically, you can test if any design or content change over there triggers any ups and downs in the engagement.

The biggest A/B testing mistakes and how to avoid them

Now that we’ve taken a deep dive into what to test, it’s time to zoom in on the most common pitfalls of email A/B testing.

1. Stopping the test too early

Yes, the pace of marketing is crazy. Campaigns need to be done fast. Observations, conclusions, retrospectives? No time for that!

☝️

Please do NOT fall into that trap. Even though it might be enticing to end the test as soon as you get preliminary positive results, doing that will leave you with incorrect data.

What to do instead

First, define a sample size before you launch your A/B test. Our default and recommended structure of the audience breakdown is as follows:

- 25% receive the A version

- 25% receive the B version

- 50% receive the winning version

Second, you need to set the testing time. If you don’t give your recipients enough time to open and act on your message, your data will most likely be unreliable.

The default in GetResponse is one day, but you can adjust it to your preferences and give your subscribers two or three days more or trim it down to a couple of hours.

Pro tip: According to data from the Email Marketing Benchmarks report, more than 50% of your emails will get opened in the first six hours of sending.
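If you ever need to reproduce the 25/25/50 breakdown outside your email platform, say for an exported list, here is a minimal Python sketch. It assumes random assignment, which is our choice for keeping both test groups representative, and it is not a description of how GetResponse splits audiences internally. The `split_audience` function, its `test_share` parameter, and the example addresses are all made up for illustration.

```python
import random


def split_audience(subscribers, test_share=0.25, seed=None):
    """Randomly split a list into group A, group B, and the remainder.

    test_share is the fraction of the list that each test variant gets
    (0.25 gives the 25/25/50 breakdown). The remainder is held back for
    the winning version.
    """
    if not 0 < test_share <= 0.5:
        raise ValueError("test_share must be greater than 0 and at most 0.5")

    shuffled = list(subscribers)           # copy so the input stays untouched
    random.Random(seed).shuffle(shuffled)  # a seed makes the split repeatable

    group_size = int(len(shuffled) * test_share)
    group_a = shuffled[:group_size]
    group_b = shuffled[group_size:2 * group_size]
    remainder = shuffled[2 * group_size:]
    return group_a, group_b, remainder


# Hypothetical list of 1,000 subscribers
subscribers = [f"user{i}@example.com" for i in range(1000)]
group_a, group_b, remainder = split_audience(subscribers, seed=42)
print(len(group_a), len(group_b), len(remainder))
```

Shuffling before slicing matters: if you simply took the first 25% of a list sorted by signup date, your test group would be made up of your oldest subscribers only.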

2. Testing without a hypothesis

The second pitfall of A/B testing is about running tests without a hypothesis or with a vague hypothesis. Without a strategic vision of what you will test, you won’t be able to draw conclusions for a particular test and future campaigns.

What to do instead

Formulate a hypothesis based on this structure:

“If we change this variable, it will affect this metric.”

But you need to be specific. Setting an unclear premise won’t be efficient. An example of an explicit statement should be like this:

“Changing the subject line from ‘Back to School’ to ‘40% off on your annual plan’ will make the message more direct and increase open rates from 15% to 25%.”
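A specific hypothesis also lets you check whether your list is big enough to test it. The Python sketch below estimates how many recipients each variant needs, using the standard normal-approximation formula for comparing two proportions. The 5% significance level and 80% power are common defaults that we assume here, not figures from any report, and the `sample_size_per_variant` name is only illustrative.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(baseline_rate, target_rate, alpha=0.05, power=0.80):
    """Approximate recipients needed per variant to detect the given lift.

    Uses the normal approximation for a two-sided test of two proportions.
    """
    if not (0 < baseline_rate < 1 and 0 < target_rate < 1):
        raise ValueError("rates must be between 0 and 1")
    if baseline_rate == target_rate:
        raise ValueError("baseline_rate and target_rate must differ")

    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # significance threshold
    z_power = NormalDist().inv_cdf(power)          # desired power

    variance = (baseline_rate * (1 - baseline_rate)
                + target_rate * (1 - target_rate))
    effect = target_rate - baseline_rate
    return math.ceil((z_alpha + z_power) ** 2 * variance / effect ** 2)


# The hypothesis above: open rate rising from 15% to 25%
needed = sample_size_per_variant(0.15, 0.25)
print(f"Recipients needed per variant: {needed}")
```

Under these assumptions, the 15% to 25% example needs roughly 250 recipients per variant. A more modest expected lift, say from 15% to 17%, needs a far larger group, which is worth knowing before you commit to a test.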

3. Not testing on a regular basis

Even though it might be tempting to test your emails a few times and take for granted that you have a silver bullet that will work in every campaign, taking shortcuts is risky. The business landscape changes, and so does your target audience. What works today might not work in a couple of months.

What to do instead

Set your A/B testing in stone. Ideally, create an SOP or a workflow where testing is an unremovable pillar of every email marketing campaign.

4. Ignoring statistical significance

Sometimes one of the variants wins, but it doesn’t mean that it would generate an uplift if you were to repeat the test. Why? Because the results were statistically insignificant: either the audience sample was too small, or the difference between the variants’ results was too minor.

What to do instead

Use an A/B testing calculator, like this one from CXL, to ensure your test generates statistically significant results. And in case your audience is too small to run such a test in one go, consider carrying out the test several times across a longer period of time, and analyzing your aggregate data afterward.
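If you’re curious what a significance check involves, one common approach is a two-proportion z-test. The Python sketch below shows that approach only; we are not claiming it is the exact method behind the CXL calculator or any other tool. The function name and the example counts are made up for illustration.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(opens_a, sent_a, opens_b, sent_b):
    """Return (z, p_value) for the difference between two open rates.

    Two-sided test with a pooled standard error.
    """
    rate_a = opens_a / sent_a
    rate_b = opens_b / sent_b
    pooled = (opens_a + opens_b) / (sent_a + sent_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / sent_a + 1 / sent_b))
    if std_err == 0:
        # Both variants had 0% or 100% opens, so there is nothing to compare
        return 0.0, 1.0

    z = (rate_b - rate_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical results: same three-point gap, very different list sizes
for sent in (100, 10_000):
    opens_a = int(sent * 0.15)
    opens_b = int(sent * 0.18)
    z, p = two_proportion_z_test(opens_a, sent, opens_b, sent)
    verdict = "significant" if p < 0.05 else "not significant"
    print(f"{sent} recipients per variant: p = {p:.4f} ({verdict} at the 5% level)")
```

The loop illustrates the point made above: a gap between 15% and 18% can be noise on a small list and a real difference on a large one.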

10 best practices for running successful email A/B tests

Once you’ve learned what to avoid, it’s time to get your hands on the best practices:

1. Start simple

Making things overly complicated at the beginning can lead to confusion. It’s better to start testing something simple yet meaningful – such as a subject line.

2. Test only one element at a time

You want to be sure which variable made a difference for higher open rates and click rates. So always compare “apples to apples” – subject line to subject line, CTA text to CTA text.

3. Choose the same time and day

Sending emails on different days of the week and hours and comparing them will give you a headache with the analytics. If you decide to go for Tuesday at 9 AM, stick to it.

4. Keep records of your tests

Regular documentation is essential to get a bird’s-eye view of your testing history. Not only will it help you get back to the results of your previous tests quickly, but it will also guide any new hire who takes over this job.
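A spreadsheet is plenty for this. If you’d rather keep the log in code, here is a minimal Python sketch that writes test records as CSV. The column names are just one possible layout that we are assuming, and the results in the example row are made-up numbers, not real campaign data.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class ABTestRecord:
    sent_on: str      # date of the send, e.g. "2024-08-20"
    element: str      # what was tested: subject line, CTA copy, etc.
    hypothesis: str
    variant_a: str
    variant_b: str
    metric: str       # winning criterion: open rate or click rate
    result_a: float
    result_b: float
    winner: str


def write_log(records, out):
    """Write the records as CSV, with a header row, to a file-like object."""
    columns = [field.name for field in fields(ABTestRecord)]
    writer = csv.DictWriter(out, fieldnames=columns)
    writer.writeheader()
    for record in records:
        writer.writerow(asdict(record))


# Illustrative entry only; the rates are invented
example = ABTestRecord(
    sent_on="2024-08-20",
    element="subject line",
    hypothesis="A more direct subject line will increase open rates",
    variant_a="Back to School",
    variant_b="40% off on your annual plan",
    metric="open rate",
    result_a=0.15,
    result_b=0.21,
    winner="B",
)

buffer = io.StringIO()
write_log([example], buffer)
print(buffer.getvalue())
```

To save the log to disk, pass a file opened with `open("ab_tests.csv", "w", newline="")` instead of the in-memory buffer.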

5. Test representative segments

The whole idea of testing before going full scale is to test the waters with a smaller sample representing your entire audience. Not too small, not too big. You can try 10% or 25%.

6. Make testing your routine

If you are serious about leveraging an email marketing program, you need to conduct A/B tests regularly. Monitor your results, iterate, and make data-based decisions.

7. Don’t rush with the analytics

Each email campaign has different dynamics, so it might take more than a few hours to get reliable results. Wait for it. The engagement can radically change within the first 48 hours.

8. Learn from your tests

Your A/B testing can be a moment of truth for your assumptions. Use the results to improve your messaging and content structure to hit home next time.

9. Understand what you’re testing

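Let’s say you’re testing two subject lines. Both consist of several nuances, and each of them alone can trigger your audience – wording, length, emoji, a number, etc. If the two versions differ in more than one of these, you won’t know which nuance made the difference, so be clear about exactly what separates them before you draw conclusions.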

10. See the big picture

Keep your finger on the pulse, not only for open rates and CTRs. Of course, observing them is great, but you must also monitor complaints and unsubscribe rates.

How to set up an email A/B test in 9 easy steps

Now, let’s walk through the A/B testing creation process in GetResponse.

Step 1. Go to the A/B test tab in the Email Marketing dashboard, and click the “Create A/B test” button.

Step 2. Decide whether you want to test the subject line or the content of your email. Select your option and hit the “Create test” button.

Step 3. Name your test, and define your “From” name and email address.

Step 4. Enter (up to 5) subject lines you want to test, plus a preview text.

Note: This option is available only if you chose the subject line test in step 2.

Step 5. Add recipients from your created lists or segments.

Step 6. Design your email content.

Here, you have a few options to choose from. If you don’t feel like an artist or a designer, you can pick one of our predesigned email templates:

But if you want to build something unique, go to the Blank templates tab and start creating your email from scratch:

And if you’re more advanced than that, the HTML editor option will do the trick!

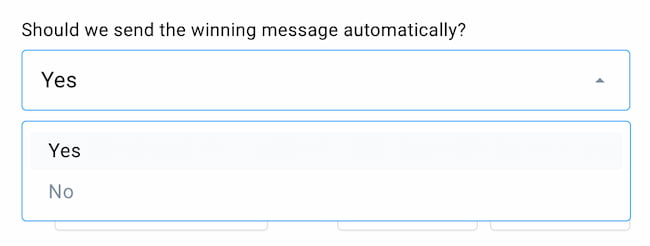

Step 7. Configure your A/B test

First, define your sample (we touched on that before). Use the slider to set up what percent of your subscribers will receive each of the email variations and how large the cohort would be that receives the winning version of your email.

Once you do that, decide whether or not the winning message will be sent on autopilot.

Then, select the winning criteria – open rate or click rate.

Next, decide how long the test should last – hours or days.

Click “Save” once you have everything set up.

Step 8. Decide when to send your email

Immediately?

Or at a predefined date?

Step 9. Save draft or schedule your A/B test

You can either save it all as a draft and come back to it later or schedule it on the spot.

After you’ve run a successful email A/B test, here’s what the report looks like:

Your turn

Great! We reached the end of your ultimate guide for email A/B testing! Now you know:

- What email A/B testing is, and why it’s crucial for your business

- What elements of email architecture you can test

- The biggest mistakes of email A/B testing and how to solve them

- And how to set up your A/B testing with GetResponse.

Now it’s time to implement all that know-how in your nearest email blast. New to GetResponse? Create a free account today and discover your new email marketing toolkit.